Our world is changing. From the COVID pandemic that came and is almost gone, to the unexpected European conflict, huge drought spells around the world, and cargo ships blocking and disrupting the supply chain around the globe – it seems like there isn’t a minute’s rest. Sometimes change is hard, but sometimes it’s an exciting evolution.

The tech world is also constantly changing. We have all learned to expect this, and by now we take it for granted – from search engines and video streaming services to online banking, and so on.

The e-commerce world is no different.

It has never been easier to mount a new e-commerce venture. Even if you are a small business, it’s now easier to create your own new digital presence and compete with large, established corporations.

This ease of action is made possible by new technologies, new platforms, and new abstractions.

The History of Data Interchange

Since the 70s, the standard of choice for exchanging business information has been EDI (Electronic Data Interchange). It was devised to describe documents such as invoices and orders across various industries, and it spawned quite a few subsets of standards. Much like XML, where everyone could have their own implementation, EDI comes in many flavors (look for X12, EDIFACT, etc.).

The National Institute of Standards and Technology defined electronic data interchange as “the computer-to-computer interchange of a standardized format for data exchange. EDI implies a sequence of messages between two parties, either of whom may serve as originator or recipient.”

It is important to note that EDI is not exclusively used in the e-commerce world – different standards can be found in Transport (UN/EDIFACT), Automotive (ODETTE/VDA), Medical (HIPAA), etc.

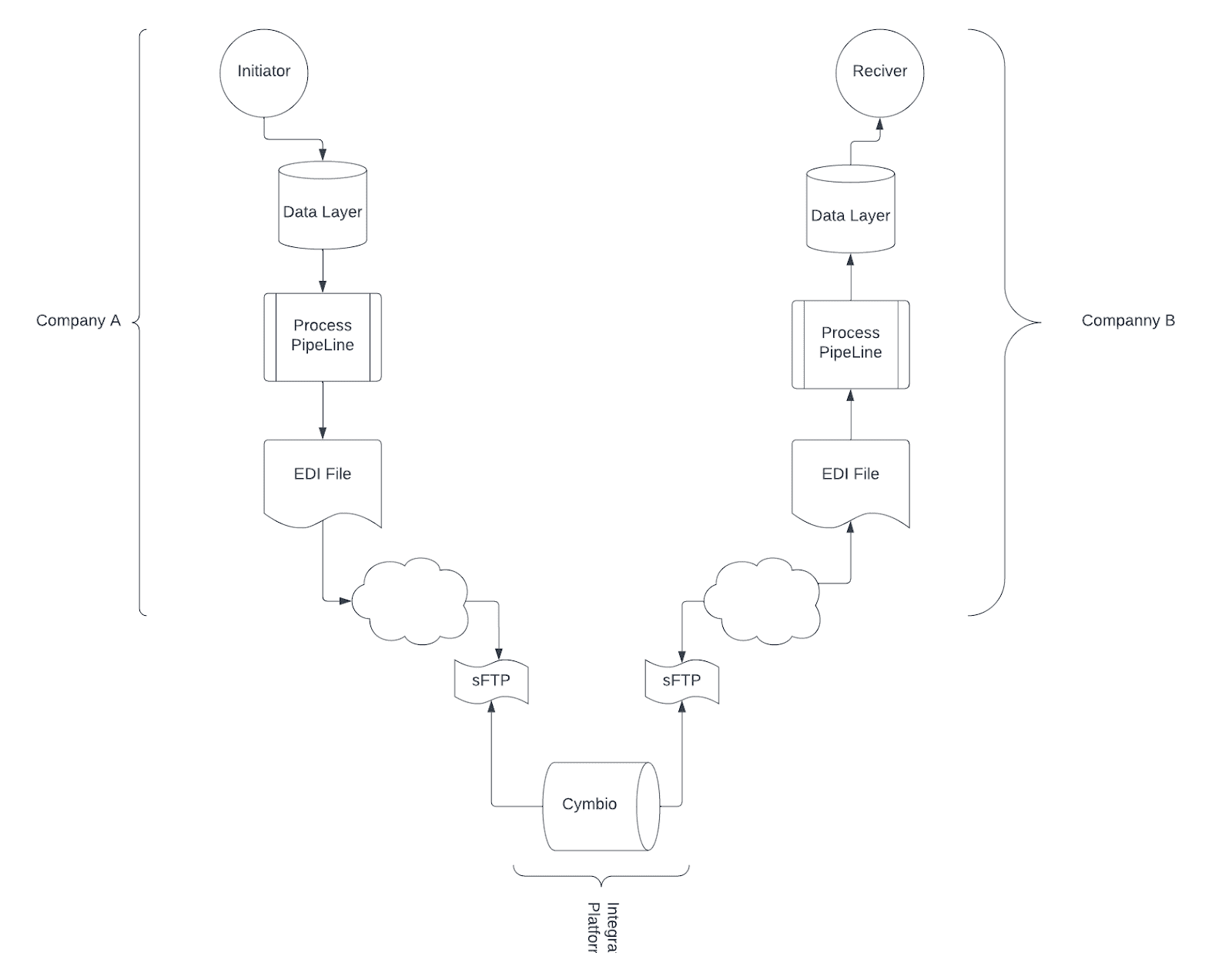

What was once accomplished through fax gradually advanced to emails and, as the digital world advanced further, evolved into robots and servers generating text files and uploading them to an agreed-upon FTP/SFTP folder or a VAN (Value Added Network). From there, a listener on the receiving side sets a hook that is triggered when a new file is uploaded, reads the incoming file, parses it, and triggers whatever business workflow the file calls for. If needed, it replies to the initiating party with its own EDI text file, carrying an acknowledgment or any further information.
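The receiving side of that flow can be sketched in a few lines. This is a minimal illustration, not a real EDI parser: it assumes an X12-style file that uses `*` between elements and `~` at the end of each segment (real files declare their separators in the interchange header), and the sample content, `handle_purchase_order`, and `HANDLERS` are hypothetical names invented for the example.

```python
def parse_segments(raw, segment_terminator="~", element_separator="*"):
    """Split a delimited EDI-style text file into segments, each a list of elements."""
    segments = []
    for chunk in raw.split(segment_terminator):
        chunk = chunk.strip()
        if chunk:  # skip the empty tail after the last terminator
            segments.append(chunk.split(element_separator))
    return segments


def handle_purchase_order(segments):
    """Hypothetical workflow: collect (product id, quantity) from each PO1 line."""
    return [(seg[7], int(seg[2])) for seg in segments if seg[0] == "PO1"]


# Maps a transaction set code (second element of the ST segment) to a workflow.
HANDLERS = {"850": handle_purchase_order}


def on_file_uploaded(raw):
    """What the upload hook would run: parse the file, then dispatch on its type."""
    segments = parse_segments(raw)
    transaction_set = segments[0][1]
    return HANDLERS[transaction_set](segments)


# Invented sample content, trimmed to a few segments for illustration.
SAMPLE_FILE = (
    "ST*850*0001~BEG*00*SA*PO-1001**20230101~"
    "PO1*1*2*EA*9.99**VP*SKU-42~SE*4*0001~"
)
line_items = on_file_uploaded(SAMPLE_FILE)
```

Even in this toy version, the parsing depends on positional elements and agreed separators, which hints at why the real process has so many moving parts.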

Transferring files in this way is a very cumbersome and lengthy process. It has quite a lot of moving parts and, as such, is prone to errors. As we move more and more into faster trading flows, this significantly throttles the speed at which companies can react.

Machine-to-Machine Communications – API

The next phase of machine-to-machine communications is the API. With web-based APIs, we switched from an asynchronous, file-based process to a faster request-and-response flow, at first using custom XML. Everyone was using their own XML, and it quickly became clear that a standard was needed. A few years passed, and a new standard was born – the (now) infamous SOAP.

The Simple Object Access Protocol is a messaging protocol that sits on top of XML and attempts to bring structure to how a message is described. It grew out of XML-RPC (XML Remote Procedure Call) as a messaging protocol layer for distributed systems (such as the world wide web), and a message consists of four basic elements – Envelope, Header, Body, and Fault.
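To make the structure concrete, here is a minimal sketch that builds a SOAP 1.1 request with Python's standard library. The operation name `GetOrderStatus` and its `orderId` parameter are hypothetical, invented for illustration; a Fault element would only appear in a response that reports an error, so it is not built here.

```python
import xml.etree.ElementTree as ET

# Namespace of the SOAP 1.1 envelope.
SOAP_NS = "http://schemas.xmlsoap.org/soap/envelope/"
ET.register_namespace("soap", SOAP_NS)


def build_envelope(operation, params):
    """Wrap a remote call in Envelope > Header + Body, returning the XML as text."""
    envelope = ET.Element(f"{{{SOAP_NS}}}Envelope")
    ET.SubElement(envelope, f"{{{SOAP_NS}}}Header")  # optional metadata goes here
    body = ET.SubElement(envelope, f"{{{SOAP_NS}}}Body")
    call = ET.SubElement(body, operation)
    for name, value in params.items():
        ET.SubElement(call, name).text = value
    return ET.tostring(envelope, encoding="unicode")


# Hypothetical operation and parameter, for illustration only.
request_xml = build_envelope("GetOrderStatus", {"orderId": "1001"})
print(request_xml)
```

Printing the result shows how much wrapping surrounds a single parameter.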

Adoption was not that fast since, well, it was not really as simple as the name implies, and it added a level of verbosity to messages that were sometimes overwhelming already.

All praise JSON.

JSON, as we know, stands for JavaScript Object Notation. Having JavaScript in the name does not mean that we can’t use it in other languages, which proves once more that there are only two hard things in Computer Science: cache invalidation and naming things (Phil Karlton).

Everybody likes JSON because it is less verbose than XML, is still readable by humans, and produces a significantly smaller message for the same data than its XML/SOAP counterpart, which lets us move more data in less time.
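A quick way to see the difference is to serialize the same record both ways and compare sizes. This is a rough sketch with a made-up `order` record; the XML side is plain XML without a SOAP envelope, so it understates the gap a SOAP message would show.

```python
import json
import xml.etree.ElementTree as ET

# Made-up record used only for the comparison.
order = {"orderId": "1001", "sku": "SKU-42", "quantity": 2}


def to_json(record):
    """Serialize the record as JSON text."""
    return json.dumps(record)


def to_xml(record, root_tag="order"):
    """Serialize the record as XML, one child element per field."""
    root = ET.Element(root_tag)
    for key, value in record.items():
        ET.SubElement(root, key).text = str(value)
    return ET.tostring(root, encoding="unicode")


json_size = len(to_json(order).encode("utf-8"))
xml_size = len(to_xml(order).encode("utf-8"))
print(f"JSON: {json_size} bytes, XML: {xml_size} bytes")
```

Every XML field pays for an opening and a closing tag, which is where most of the extra bytes come from.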

But we are still left in the API domain. For a long time, this was, and still is, the most popular means of communication between servers. Facebook’s GraphQL is putting up a strong fight, and gRPC with its binary payload is also popular, but the main data transport is still JSON on top of an API.

Now, we increasingly see a new trend emerging, where strong technical companies opt to move away from the API approach and embrace event-driven communication, using tools such as Apache Kafka, Google Cloud Pub/Sub, and Amazon SQS.

So what is an event-based system?

An event can be described as an action that causes a change in state. It’s easy to think of an event (in our context of e-commerce) as what happens when a customer adds an item to, or removes an item from, their shopping cart. Each time the user clicks on the ‘Add item’ button, an event is sent to the server with the item added and its quantity (for example). All events are stored in the order in which they were received (First-In, First-Out – FIFO), and when we want to show the user the contents of their cart, we loop over the stored events and calculate the current state.
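The cart example can be sketched as a replay over the stored events. The `CartEvent` shape and the event names `item_added` and `item_removed` are assumptions made for this illustration, not a prescribed schema.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class CartEvent:
    kind: str       # "item_added" or "item_removed" (hypothetical names)
    sku: str
    quantity: int


def current_cart(events):
    """Replay events in the order received and return the resulting cart."""
    cart = Counter()
    for event in events:
        if event.kind == "item_added":
            cart[event.sku] += event.quantity
        elif event.kind == "item_removed":
            cart[event.sku] -= event.quantity
        else:
            raise ValueError(f"unknown event kind: {event.kind}")
    # Items whose quantity dropped to zero are no longer in the cart.
    return {sku: qty for sku, qty in cart.items() if qty > 0}


event_log = [
    CartEvent("item_added", "SKU-42", 2),
    CartEvent("item_added", "SKU-7", 1),
    CartEvent("item_removed", "SKU-42", 1),
    CartEvent("item_removed", "SKU-7", 1),
]
cart = current_cart(event_log)
```

The cart is never stored directly; it is derived from the log, so replaying the same events always yields the same state.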

An event system is comprised of:

- Event producer – responsible for creating and publishing the event

- Mediator/Broker – the part in the system responsible for propagating the events

- Event consumer – the entity interested in this event, who will initiate some flow on receiving the said event
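These three roles can be sketched with a tiny in-memory broker. It is a toy stand-in for a real broker, and the class, method, and topic names are hypothetical; the point is only that publishing returns immediately and delivery happens later.

```python
from collections import defaultdict, deque


class Broker:
    """Toy in-memory mediator: queues events and delivers them on demand."""

    def __init__(self):
        self._handlers = defaultdict(list)
        self._pending = deque()

    def subscribe(self, topic, handler):
        """Register a consumer callback for a topic."""
        self._handlers[topic].append(handler)

    def publish(self, topic, payload):
        """Producer side: enqueue the event and return without waiting."""
        self._pending.append((topic, payload))

    def deliver_pending(self):
        """Hand queued events to their consumers in FIFO order."""
        delivered = 0
        while self._pending:
            topic, payload = self._pending.popleft()
            for handler in self._handlers[topic]:
                handler(payload)
            delivered += 1
        return delivered


broker = Broker()
received = []                                        # the consumer's view
broker.subscribe("cart.item_added", received.append)
broker.publish("cart.item_added", {"sku": "SKU-42", "quantity": 2})
seen_before_delivery = list(received)                # nothing consumed yet
delivered_count = broker.deliver_pending()           # consumer catches up
```

The producer never calls the consumer directly, so either side can be replaced or scaled without the other knowing.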

So when we compare the classic API with events: in API mode, we have a request followed by a response – synchronous communication. With events, we publish an event, and the consuming system processes it when it is ready – an asynchronous flow.

When we send a large amount of data in a classic API request, we need to wait until the receiving system has loaded all the data, which has a huge impact on memory. In an event system, we can stream the data: the receiving end reads a stream of incoming events that are each small in size, making the memory footprint much smaller, and that is what allows it to scale.
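The memory argument can be illustrated with a generator standing in for the event stream. This is a simplified sketch with made-up event contents: the consumer keeps only a running total and one event at a time, rather than the whole payload.

```python
def incoming_events(count):
    """Generator standing in for a stream of small incoming events."""
    for i in range(count):
        yield {"sku": f"SKU-{i % 3}", "quantity": 1}


def consume(stream):
    """Process events one by one, holding only the running totals in memory."""
    totals = {}
    for event in stream:
        totals[event["sku"]] = totals.get(event["sku"], 0) + event["quantity"]
    return totals


totals = consume(incoming_events(9))
```

Raising `count` to millions changes the running time but not the peak memory, because no list of events is ever built.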

An event-driven system was, and still is, a go-to solution when it comes to scaling traffic to your system. It allows for a “fire and forget” style, where you publish an event knowing that it will be picked up according to the end system’s availability. Compare this with the classic API approach, where you force the other system to respond to you, or rather expect it to, without knowing its current availability. This forces you to handle request failures and implement circuit-breaker mechanisms. In API mode, your system is more brittle and requires a lot of supporting flows and pipelines.
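As an illustration of the supporting machinery a synchronous API client ends up carrying, here is a minimal circuit-breaker sketch. The class name, thresholds, and injected `clock` are assumptions for the example; production libraries offer more states and options.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when calls are skipped because the remote side looks unavailable."""


class CircuitBreaker:
    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self._max_failures = max_failures
        self._reset_after = reset_after
        self._clock = clock
        self._failures = 0
        self._opened_at = None  # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self._reset_after:
                raise CircuitOpenError("circuit is open; skipping call")
            # Cool-down elapsed: let one trial call through (half-open).
        try:
            result = func(*args, **kwargs)
        except Exception:
            self._failures += 1
            if self._failures >= self._max_failures:
                self._opened_at = self._clock()  # open, or re-open after a failed trial
            raise
        # Success closes the circuit and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

A caller would wrap each outgoing request, for example `breaker.call(fetch_order, order_id)`, where `fetch_order` is whatever function performs the request. None of this is needed on the publishing side of an event flow.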

The classic API approach worked well for the last 10-20 years, but the world is changing and evolving. The digital footprint of a company and the traffic that the e-commerce domain experiences today are ten times what they once were, and your system and architecture must account for this.

Adding more machines behind a load balancer will only get you so far. Event-based systems, both in and out of your system, are the way to go.

APIs cannot operate on a fire-and-forget principle. Today, outside of flows that need an immediate answer, such as authentication, an event-based system is a good and strong option.

Cymbio at Work

We, at Cymbio, operate as a drop ship and marketplace platform. We have hundreds of integrations with retailers on one side and suppliers and warehouses on the other. Our system needs to support many protocols so we can talk with all the systems in existence. We have to understand custom XML, SOAP, JSON, and EDI, and we must know how to operate with classic APIs and file transfer protocols. This puts us in a unique position to understand what is going on in the IT side of the drop ship world. Several of our biggest customers have switched to an event-based system, and when I say biggest, I mean in GMV and features. More features and more traffic usually mean a more tech-savvy company, so we can say that the direction is clear.

Developing a robust and flexible system that can support all of these features and capabilities means that we need to continuously update how we develop. Creating an integration platform that is agnostic to the communication paradigm and protocols pushes us to adopt a ports and adapters architecture (sometimes referred to as hexagonal architecture).
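The general idea of ports and adapters can be sketched as follows. This is a generic illustration and not a description of Cymbio's actual code: `OrderSource`, both adapters, and `total_units` are hypothetical names, and the two input formats are simplified stand-ins for an API payload and a transferred file.

```python
import json
from typing import Protocol


class OrderSource(Protocol):
    """The port: what the core logic needs, regardless of protocol."""

    def fetch_orders(self) -> list: ...


class JsonApiAdapter:
    """Adapter for a JSON payload such as an API response body."""

    def __init__(self, payload):
        self._payload = payload

    def fetch_orders(self):
        return [
            {"sku": o["sku"], "quantity": int(o["quantity"])}
            for o in json.loads(self._payload)["orders"]
        ]


class DelimitedFileAdapter:
    """Adapter for a simple 'sku,quantity' text file."""

    def __init__(self, raw):
        self._raw = raw

    def fetch_orders(self):
        orders = []
        for line in self._raw.splitlines():
            if line.strip():
                sku, quantity = line.split(",")
                orders.append({"sku": sku.strip(), "quantity": int(quantity)})
        return orders


def total_units(source: OrderSource) -> int:
    """Core logic: depends only on the port, never on a concrete protocol."""
    return sum(order["quantity"] for order in source.fetch_orders())
```

Supporting a new protocol, or an event stream, then means writing one more adapter while `total_units` and the rest of the core stay untouched.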

Moving to this area is very exciting for us. We hope to be able to support a wider variety of platforms and business opportunities while using advanced and cutting-edge technologies that help us have strong developer retention and commitment – which is a win-win, both for our business and our internal engineering culture.

Conclusion

In this article, I’ve described the shift that we see today in the e-commerce business domain. This increase in business means an increase in traffic and data, and the need to scale is more important than ever.

The API approach, albeit very good for some, is becoming a bottleneck for others – and if you want to be big, you must adopt big changes.

Adopting an event-based approach allows companies to scale better and more easily, and helps promote fast feature adoption, since the contract between clients can easily be pushed forward.

Written by: Eyal Mrejen, VP Engineering @Cymbio